[Gallery: media not shown. Columns: Skeleton, Bones, Skinning, Combined. Rows: Clothed Human 4D Gaussians; Quadruped Mesh · Bucks; Garment Mesh · Cloth; Dragon Mesh · Biped.]

Rigging animatable characters, such as quadrupeds and clothed humans, presents a fundamental challenge: balancing intuitive control with deformation fidelity. Kinematic skeletons offer intuitive control, but natural surface deformations involve significant non-rigid dynamics, such as loose clothing and soft tissue, that skeletons alone cannot easily capture. Free-form bones that conform closely to the surface can capture these non-rigid deformations effectively, but they lack the kinematic structure needed for intuitive control. We combine the two in a Scaffold-Skin Rigging System, termed “skelebones”, built in three core steps: (1) Bones: compress temporally consistent deformable Gaussians into free-form bones that approximate non-rigid surface deformations; (2) Skeleton: extract a Mean Curvature Skeleton from the canonical Gaussians and refine it temporally, ensuring a category-agnostic, motion-adaptive, and topology-correct kinematic structure; (3) Binding: bind the skeleton and bones via non-parametric partwise motion matching, which synthesizes novel bone motions by matching, retrieving, and blending existing ones. Together, these steps compress the Level of Dynamics of the reconstructed Gaussian sequences into compact skelebones that are both controllable and expressive. We validate our approach on both synthetic and real-world datasets, achieving significant improvements in reanimation performance on unseen poses, with PSNR gains of 17.3% over Linear Blend Skinning (LBS) and 21.7% over Bag-of-Bones (BoB), while maintaining excellent reconstruction fidelity, particularly for characters exhibiting complex non-rigid surface dynamics. Our Partwise Motion Matching algorithm generalizes well to both Gaussian and mesh representations, even in low-data regimes (~1000 frames), achieving a 48.4% RMSE improvement over robust LBS and outperforming GRU- and MLP-based learning methods by >20%. Code will be made publicly available for research purposes.

Pipeline Overview. Given a monocular or multi-view video, our method reconstructs a temporally consistent 4D Gaussian Splatting (4DGS) representation, extracts the inner skeleton via curve skeletonization and the outer free-form bones via Smooth Skinning Decomposition with Rigid Bones (SSDR), and combines the two into “skelebones”, which are then used to build a motion database.
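To make the role of the free-form bones concrete, the sketch below shows the linear blend skinning forward model that a skinning decomposition such as SSDR fits: each rest-pose point is moved by a weighted blend of per-bone rigid transforms. It covers only the forward deformation for one frame, not the decomposition solver, and it is not the authors' implementation. The function name `blend_skinning` and all array names are hypothetical.

```python
import numpy as np

def blend_skinning(rest_points, weights, rotations, translations):
    """Deform rest-pose points with linear blend skinning (illustrative sketch).

    rest_points:  (N, 3) canonical positions, e.g. Gaussian centers
    weights:      (N, B) non-negative skinning weights, each row sums to 1
    rotations:    (B, 3, 3) per-bone rotation matrices for one frame
    translations: (B, 3) per-bone translations for one frame
    returns:      (N, 3) deformed positions
    """
    # Apply every bone transform to every point: shape (B, N, 3).
    per_bone = np.einsum("bij,nj->bni", rotations, rest_points)
    per_bone = per_bone + translations[:, None, :]
    # Blend the per-bone results with the skinning weights.
    return np.einsum("nb,bni->ni", weights, per_bone)

# Toy example: two bones, the second one shifted by +1 along y.
rest = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [2.0, 0.0, 0.0]])
weights = np.array([[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]])
rotations = np.stack([np.eye(3), np.eye(3)])
translations = np.array([[0.0, 0.0, 0.0], [0.0, 1.0, 0.0]])
deformed = blend_skinning(rest, weights, rotations, translations)
```

In this toy case the middle point, which is shared equally by both bones, moves half as far as the point bound only to the second bone.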

Inner Skeleton Initialization. We first extract the curve skeleton (A) of the object in the canonical space. Then we estimate the joint locations on the curve skeleton through skinning analysis. Specifically, we project the skinning weights of the 3D points onto the curve skeleton (B), and then identify positions along the 1D curve where neighboring skinning weights exhibit the highest similarity as potential joint locations (C). Finally, we traverse the curve skeleton using Depth-First Search (DFS) to construct the kinematic tree (D).
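Step (D) can be illustrated with a small traversal routine. The sketch below assumes the candidate joints have already been placed on the curve skeleton and are given as an undirected, connected, acyclic adjacency map; it then derives a parent for every joint by depth-first search from a chosen root. The function name, the joint names, and the choice of root are hypothetical and not taken from the authors' code.

```python
def build_kinematic_tree(adjacency, root):
    """Return (parent, order) from an iterative depth-first traversal.

    adjacency: dict mapping joint id -> list of neighboring joint ids
               (undirected; assumed connected and free of cycles)
    root:      joint id chosen as the root of the hierarchy
    """
    parent = {root: None}
    order = []
    stack = [root]
    while stack:
        joint = stack.pop()
        order.append(joint)
        # Push in reverse so neighbors are visited in their listed order.
        for neighbor in reversed(adjacency[joint]):
            if neighbor not in parent:
                parent[neighbor] = joint
                stack.append(neighbor)
    return parent, order

# Toy skeleton graph with hypothetical joint names.
adjacency = {
    "pelvis": ["spine", "left_leg", "right_leg"],
    "spine": ["pelvis", "head"],
    "head": ["spine"],
    "left_leg": ["pelvis"],
    "right_leg": ["pelvis"],
}
parent, order = build_kinematic_tree(adjacency, "pelvis")
```

Because every joint appears in `order` after its parent, the list can be walked front to back when accumulating transforms down the hierarchy.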

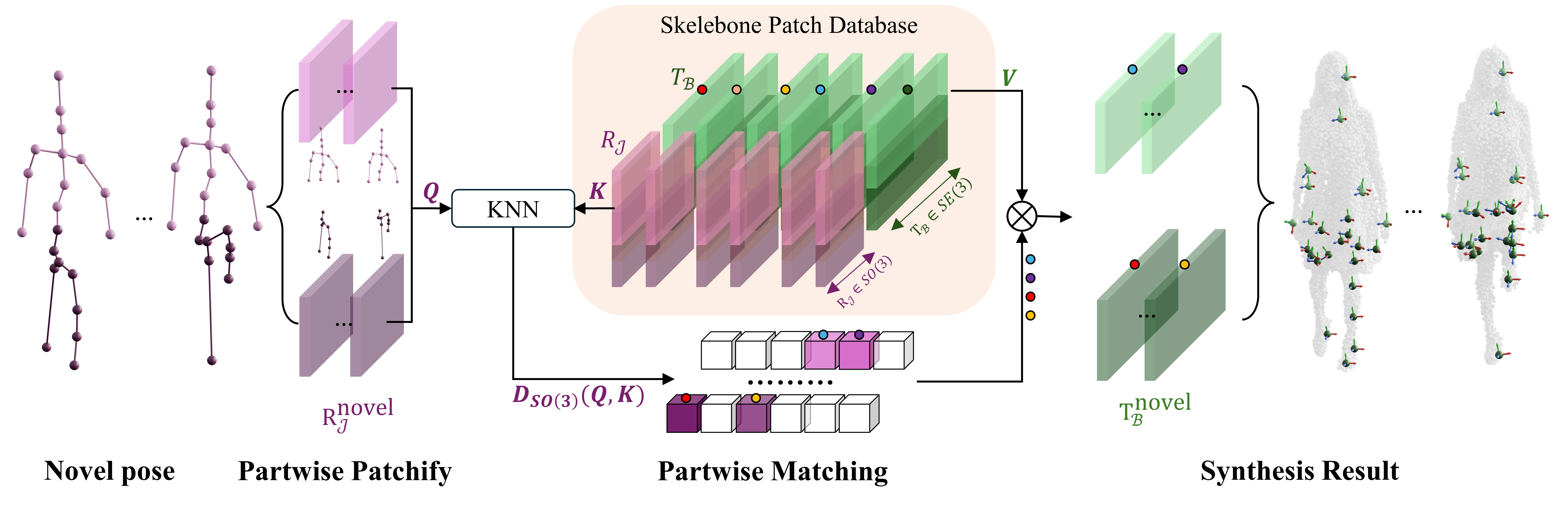

Partwise Motion Matching (PartMM). Given a novel inner-skeleton pose sequence, we animate skelebones by synthesizing outer-bone motion via part-wise matching. Our method (a) decomposes the kinematic tree into multiple parts (two are shown here; the partition is user-defined in practice); (b) extracts part-wise motion patches $R_J^{\text{novel}}$ from the novel pose sequence; and (c) queries these patches against a pre-built motion database, retrieves similar patches, recompiles them, and performs part-level spatial alignment. Iterating step (c) yields the final motion $T_B^{\text{novel}}$.
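The retrieval in step (c) can be sketched for a single part as nearest-neighbor patch matching. The example below is a simplified illustration under several assumptions: poses and bone motions are plain feature vectors per frame, similarity is squared L2 distance, and overlapping retrieved patches are blended by averaging. It omits the part-level spatial alignment and the iteration described above, and the names `extract_patches` and `match_part_motion` are hypothetical.

```python
import numpy as np

def extract_patches(sequence, patch_len, stride):
    """Split a (T, D) sequence into windows; returns (starts, patches)."""
    starts = np.arange(0, len(sequence) - patch_len + 1, stride)
    patches = np.stack([sequence[s:s + patch_len] for s in starts])
    return starts, patches

def match_part_motion(novel_skel, db_skel, db_bones, patch_len=4, stride=2):
    """Synthesize bone motion for one part by nearest-neighbor patch retrieval.

    novel_skel: (T_new, D_s) novel skeleton features for this part
    db_skel:    (T_db, D_s) database skeleton features for the same part
    db_bones:   (T_db, D_b) bone motion aligned frame-by-frame with db_skel
    returns:    (T_new, D_b) blended bone motion
    """
    q_starts, queries = extract_patches(novel_skel, patch_len, stride)
    k_starts, keys = extract_patches(db_skel, patch_len, 1)
    # Squared L2 distance between every query patch and every database patch.
    diff = queries[:, None] - keys[None]
    dist = (diff ** 2).sum(axis=(2, 3))
    nearest = k_starts[dist.argmin(axis=1)]

    out = np.zeros((len(novel_skel), db_bones.shape[1]))
    count = np.zeros((len(novel_skel), 1))
    for q, k in zip(q_starts, nearest):
        out[q:q + patch_len] += db_bones[k:k + patch_len]
        count[q:q + patch_len] += 1
    # Average overlapping patches; frames covered by no patch stay zero.
    return out / np.maximum(count, 1)

# Toy database where bone motion is a known function of the skeleton pose.
db_skel = np.arange(10, dtype=float).reshape(-1, 1)
db_bones = 2.0 * db_skel + 1.0
novel_skel = db_skel[3:9]
novel_bones = match_part_motion(novel_skel, db_skel, db_bones)
```

A non-parametric lookup of this kind needs no training, which is one plausible reason matching remains usable when only a small amount of motion data is available.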

We thank Ling-Hao Chen for fruitful discussions on motion matching for retargeting, which inspired our shift from learning-based to matching-based approaches; Peizhuo Li and Gengshan Yang for insightful feedback during the literature survey; Siyuan Yu for testing and visualization support; Yue Chen and Xingyu Chen for helpful suggestions on figure design; the members of Endless AI Lab for their discussions and proofreading; and the ActorsHQ, DNA-Rendering, DeformingThings4D, and VTO dataset teams for providing the datasets.

We also gratefully acknowledge the following open-source projects:

| Project | Description |
|---|---|
| BANMo | Deformable 4D reconstruction from casual videos |
| DressRecon | Freeform 4D human reconstruction |
| Dynamic 3D Gaussians | Persistent dynamic Gaussian tracking |
| RigGS | Gaussian-based articulated rigging |
| Mean Curvature Skeleton | Curve skeleton extraction via mean curvature flow |
| DemBones | Smooth skinning decomposition with rigid bones |
| Motion2Motion | Cross-topology motion transfer |
| Drop the GAN | Patch nearest-neighbor generative framework |
| GenMM | Generative motion matching from single examples |

This work is supported by the Research Center for Industries of the Future (RCIF) at Westlake University and the Westlake Education Foundation.